Gemma 4: AI on Your Phone Without Internet and Free

Can you imagine chatting to your AI on a plane or on a desert island with no mobile signal, whilst keeping all your information private on your phone? That’s exactly what you can do with Google’s Gemma 4, Gemini’s little brother.

Gemma 4 from Google lets you run AI directly on your phone, with no Wi-Fi, no mobile data, and nothing sent to any server. You’re on a high-speed train going through a tunnel. No signal. You pull out your phone, open an app, and ask it to summarize a 40-page report before you arrive at your meeting. The AI does it in seconds.

Two years ago, this was science fiction. Today, with Gemma 4 from Google and apps like Edge Gallery or Locally AI, you can do this right now with your phone.

If you’ve been following the AI space for a while, you know that on-device AI was a half-kept promise until recently: the more powerful the model, the more you depended on the cloud. Models like Claude Opus 4.6 or GPT 5.4 need massive servers to run. But that equation is starting to break.

In this article you’ll understand what Gemma 4 is, how you can use it today on your phone without a connection, what it can do (and what it can’t), how the same technology is transforming edge computing and IoT devices, and why all of this matters to your business more than you think.

What Is Gemma 4 and Why Should You Care

Gemma is Google’s family of open AI models. It’s not a cloud service: it’s a model you download, run on your own hardware, and use however you want. Gemma 4, the latest generation, is the most capable open model Google has released to date.

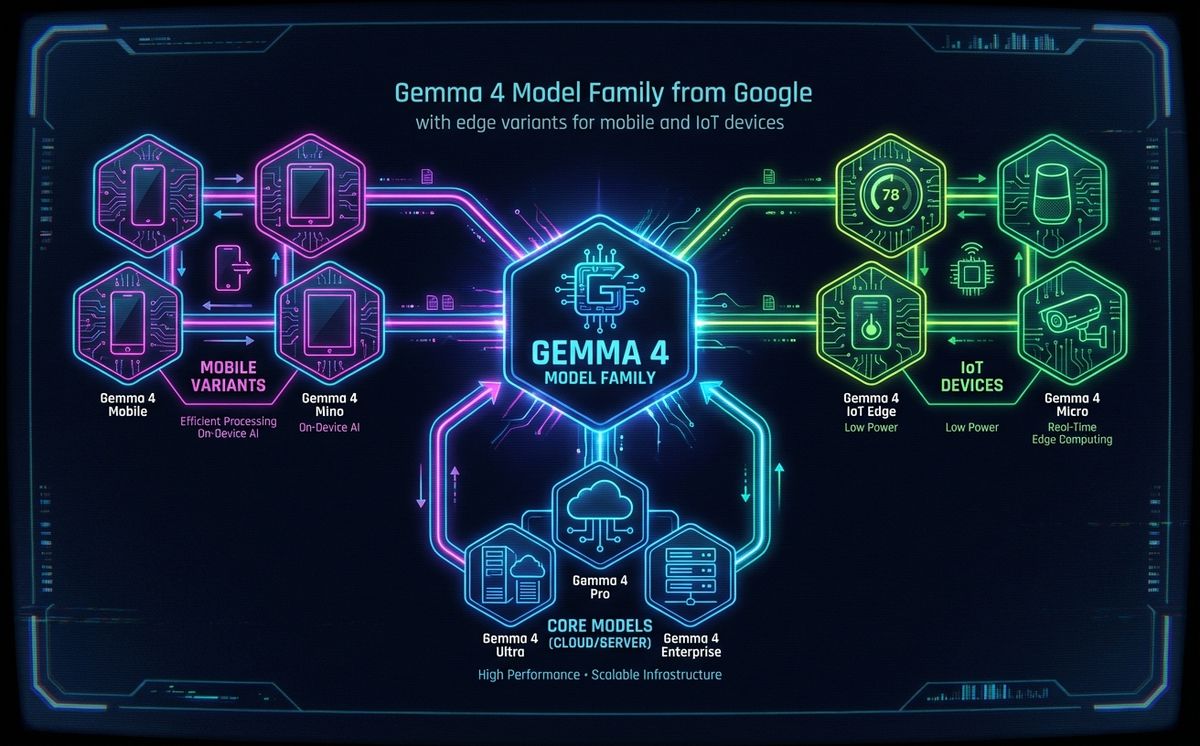

The family includes several sizes:

- 31B and 26B MoE: The large models, built for servers and powerful GPUs

- E4B and E2B: The edge models, designed to run directly on your phone, a Raspberry Pi, or an NVIDIA Jetson

The edge models are the ones we care about here. The E4B and E2B are optimized to run within the limited resources of a phone. And we’re not talking about a basic chatbot.

Gemma 4 is multimodal: it understands text, images, and audio. It includes an audio encoder capable of transcribing speech and translating across multiple languages in segments of up to 30 seconds. The edge models have a 128K token context window, which is enough to process long documents without issues.
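To get a feel for what a 128K-token window and 30-second audio segments mean in practice, here is a rough sketch. The words-per-page and tokens-per-word ratios are assumptions for illustration, not figures from the Gemma 4 tokenizer, and the helper names are hypothetical.

```python
import math

CONTEXT_WINDOW_TOKENS = 128_000  # edge-model window quoted above, taken as 128,000
MAX_AUDIO_SEGMENT_S = 30         # audio segment limit quoted above


def estimate_tokens(pages: int, words_per_page: int = 500,
                    tokens_per_word: float = 1.3) -> int:
    """Rough token count for a document. Both ratios are assumptions."""
    return math.ceil(pages * words_per_page * tokens_per_word)


def fits_in_context(pages: int, reserve_for_answer: int = 2_000) -> bool:
    """True if the estimated document still leaves room for the model's reply."""
    return estimate_tokens(pages) + reserve_for_answer <= CONTEXT_WINDOW_TOKENS


def audio_segments(total_seconds: float) -> list[tuple[float, float]]:
    """Split a recording into (start, end) chunks of at most 30 seconds."""
    segments = []
    start = 0.0
    while start < total_seconds:
        end = min(start + MAX_AUDIO_SEGMENT_S, total_seconds)
        segments.append((start, end))
        start = end
    return segments


print(fits_in_context(40))   # the 40-page report from the introduction
print(audio_segments(75))    # a 75-second voice note
```

Under these assumptions a 40-page report uses roughly a fifth of the window, while a 75-second recording would need three segments.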

And an important detail: it’s natively trained in over 140 languages, including regional Spanish variations. It’s not a forced translation from English. It actually understands multilingual input.

All under an Apache 2.0 license. That means any company, developer, or institution can download it, modify it, fine-tune it with their own data, and commercialize the result. Free. Already available on Hugging Face, Kaggle, Ollama, and LM Studio.
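For developers, "download it and run it" can look like the sketch below, which talks to a locally running Ollama server over its HTTP chat endpoint using only the standard library. The model tag `gemma4:e4b` is a hypothetical placeholder (check the name your Ollama install actually lists), and the function names are ours, not part of any library.

```python
import json
import urllib.request

OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local endpoint
MODEL_TAG = "gemma4:e4b"  # hypothetical tag; use the name shown by `ollama list`


def build_chat_request(prompt: str, model: str = MODEL_TAG) -> urllib.request.Request:
    """Build (but do not send) a chat request for a local Ollama server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON reply instead of a stream
    }
    return urllib.request.Request(
        OLLAMA_CHAT_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str) -> str:
    """Send the request. Requires Ollama running with the model already pulled."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        return json.loads(response.read())["message"]["content"]
```

Nothing in this request leaves the machine: the only network hop is to `localhost`.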

Ana, the director of a 12-person consulting firm, tried it last week. She downloaded the E4B model on her iPhone, fed it three commercial proposals in PDF, and asked for a comparative summary. “It took 15 seconds, and it did it offline, on the subway,” she told us. “It’s not perfect, but for a quick first filter it’s incredible. And I didn’t send my clients’ data to any third-party server.”

If you want to understand how current AI models compare for daily work, we recommend our Claude vs ChatGPT comparison, where we break down the practical differences between the most widely used models.

How to Run Gemma 4 on Your Phone Right Now

You don’t need to be a developer. There are two main paths to get AI without internet running on your phone today.

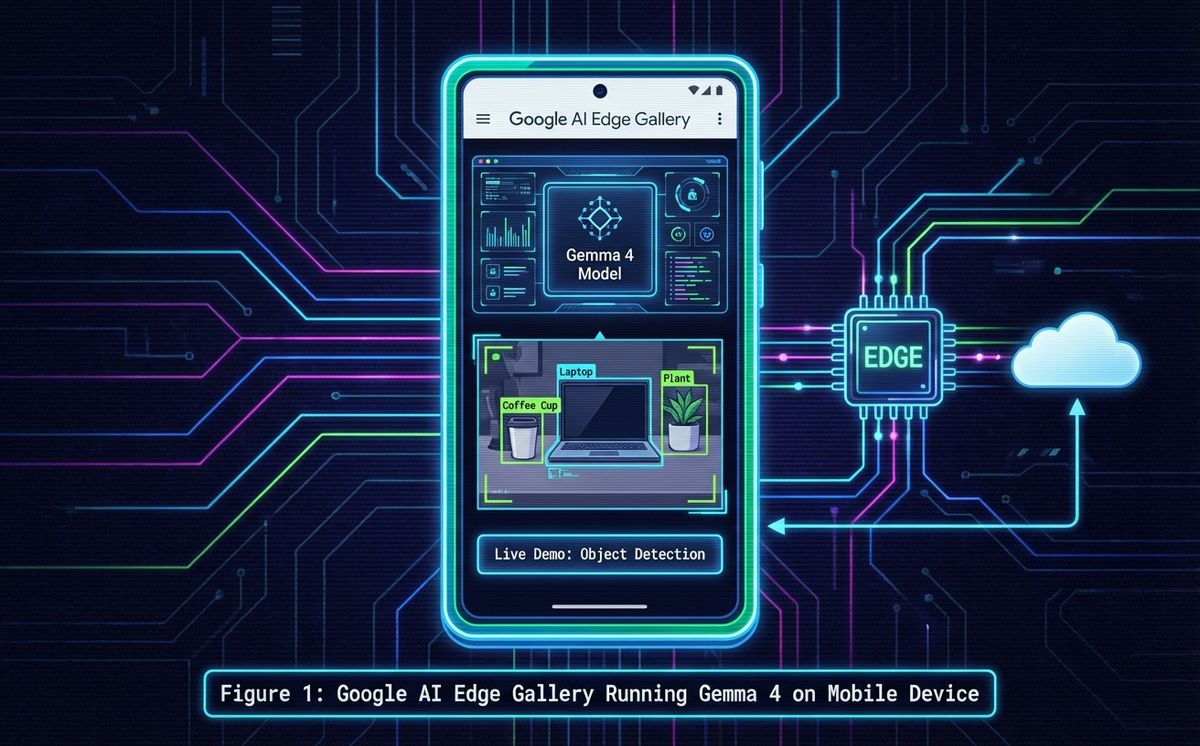

Google AI Edge Gallery

This is Google’s official app for running AI models on-device. Available on iOS and Android. You install it, select the Gemma 4 model (E2B or E4B depending on your phone’s capabilities), download it once with a connection, and from there it works completely offline.

Inside Edge Gallery you can access Gemma 4’s capabilities organized by function:

- Chat: General conversation, questions, summaries, translations

- Agent Skills: Autonomous multi-step workflows executed directly on the device

- Image: Image analysis and description

- Scribe: Audio transcription and writing assistance

Agent Skills are especially interesting. It’s one of the first implementations of AI agents that run autonomous multi-step workflows entirely on-device. No cloud. No API. All local.

Locally AI and Other Alternatives

If you prefer more control, apps like Locally AI, Private LLM (iOS), or MLCChat (Android) let you download and run AI models locally with more configuration options.

Locally AI is especially interesting because it’s not limited to Gemma. You can also run models like Llama 3.2, Phi-4 mini, or Qwen 2.5. It’s like having Ollama in your pocket. Another interesting option is Osaurus for Mac.

The experience isn’t perfect. Response times are slower than in the cloud. Edge models don’t have the reasoning depth of a server-side model. But for tasks like summarizing documents, translating, answering questions about a text, or holding a conversation, they work surprisingly well.

Can a Pocket AI Compete with Claude Opus or GPT 5.4?

The short answer: no. Not yet.

Let’s be honest. Gemma 4 E4B is a model in the 4-billion-parameter class running on a mobile chip. Claude Opus 4.6 and GPT 5.4 are far larger models (their exact sizes are not public), running on GPU clusters that consume more electricity than an office building.

The difference shows in complex reasoning, long code generation, deep multi-step analysis, and advanced creativity. An Opus 4.6 can sustain a two-hour technical conversation with perfect context. A Gemma 4 on your phone falls short there.

But here’s the important takeaway: the trajectory.

Think about where language models were just a few years ago. When ChatGPT launched in late 2022, GPT-3.5 was the best thing most people could use and was considered revolutionary. Today, an open source model that fits on your phone outperforms GPT-3.5 on many tasks that previously required a server.

According to Gartner, by 2027 organizations will use small, specialized models three times more than large general-purpose models. The trend, also confirmed by the Edge AI Vision Alliance analysis on the state of on-device LLMs in 2026, is clear: small language models are improving at an accelerating pace.

Is it far-fetched to think that a hypothetical Gemma 6 or Gemma 7, running on 2028 or 2029 mobile hardware, could match what an Opus 4.6 does in the cloud today? It’s not. Moore’s law keeps pushing, mobile chips are increasingly powerful, and model quantization and distillation techniques advance every quarter.
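Quantization matters because the weights have to fit in a phone’s memory. The back-of-the-envelope sketch below shows how the storage for the weights alone shrinks as precision drops. It assumes a round 4 billion parameters for illustration (not an official Gemma figure) and ignores activations and the context cache, which add to the total.

```python
def weight_memory_gb(parameters: float, bits_per_weight: int) -> float:
    """Approximate size of the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


PARAMS = 4e9  # illustrative round figure, not an official parameter count

for bits in (16, 8, 4):
    print(f"{bits:>2}-bit weights: {weight_memory_gb(PARAMS, bits):.1f} GB")
```

Going from 16-bit to 4-bit weights cuts the estimate from 8 GB to 2 GB, which is the difference between not fitting and fitting on many current phones.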

Carlos, CTO of an agtech startup, puts it well: “Today I use Opus for designing our system architecture and Qwen 3.5 on my phone for quick lookups when I’m out visiting greenhouses. They’re complementary tools. But I see the curve. In three or four years, the phone model will cover 80% of what I currently need from the large one.”

The future of AI isn’t necessarily bigger. It’s closer.

Edge Computing and IoT: The Same Revolution in Industrial Devices

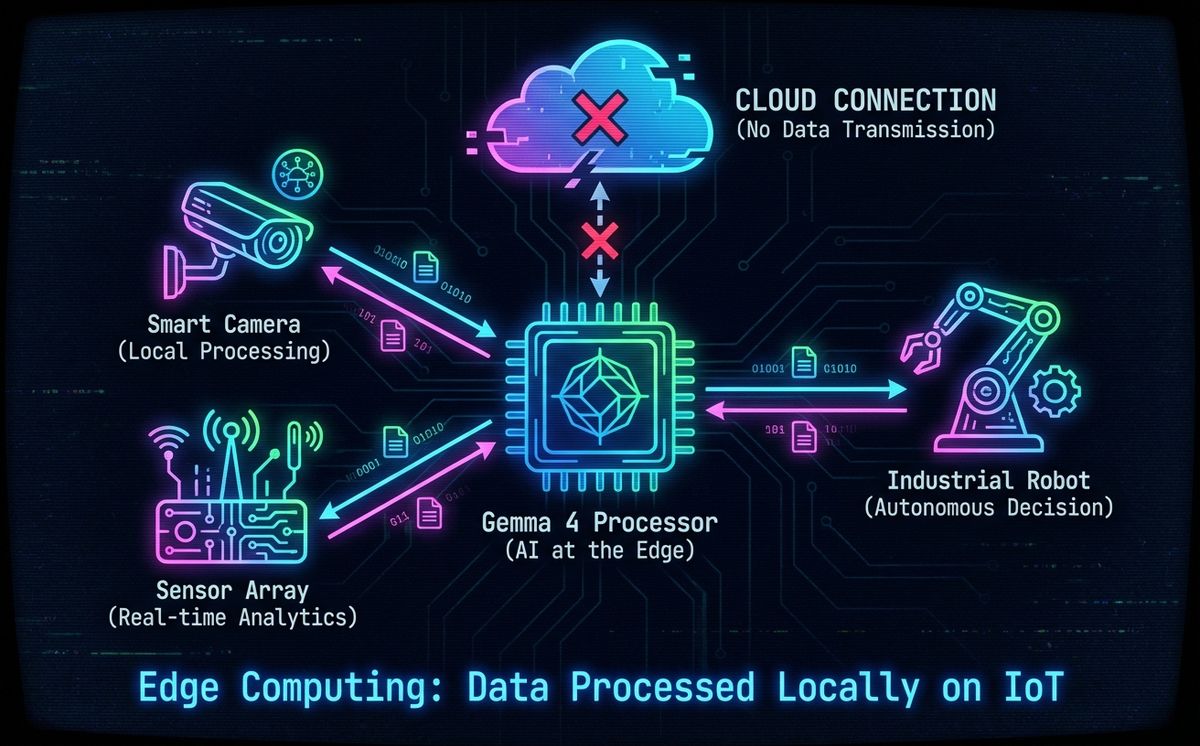

If AI works offline on your phone, think about what that means for devices that have never had good internet connectivity: sensors in a factory, cameras on a farm, medical devices in an ambulance.

What Is Edge Computing and Why It Matters

Edge computing means processing data directly on the device or close to it, instead of sending it to a cloud server. It’s not a new concept, but the combination with AI changes everything.

Four reasons why on-device AI matters for IoT:

- Near-zero latency: A sensor that processes data locally responds in milliseconds. Sending that data to the cloud and waiting for a response can take hundreds of milliseconds or seconds. In industrial or medical applications, that difference saves equipment and lives.

- Total privacy: Data never leaves the device. For regulated sectors like healthcare, finance, or defense, this massively simplifies compliance.

- Works without connectivity: A factory in a rural area, a greenhouse, a ship, an ambulance. Many industrial environments don’t have reliable connectivity. Edge AI works regardless.

- Reduced cost: Processing on-device eliminates data transfer costs and cloud API fees. At scale, the difference is massive.

Edge computing with integrated AI transforms sectors that previously depended entirely on the cloud.
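The latency argument can be made concrete with a small budget check. All the millisecond figures below are hypothetical placeholders, and the class and function names are ours. The point is only that a network round trip counts against the same deadline as the inference itself.

```python
from dataclasses import dataclass


@dataclass
class InferencePath:
    name: str
    network_round_trip_ms: float  # 0 for on-device processing
    inference_ms: float

    @property
    def total_ms(self) -> float:
        return self.network_round_trip_ms + self.inference_ms


def paths_within_deadline(paths: list[InferencePath], deadline_ms: float) -> list[str]:
    """Return the names of the paths whose total latency meets the deadline."""
    return [p.name for p in paths if p.total_ms <= deadline_ms]


# Hypothetical figures for illustration only; measure on your own hardware.
options = [
    InferencePath("on-device", network_round_trip_ms=0, inference_ms=40),
    InferencePath("cloud", network_round_trip_ms=250, inference_ms=15),
]
print(paths_within_deadline(options, deadline_ms=100))  # ['on-device']
```

With these numbers the cloud path has the faster model but still misses a 100 ms deadline, because the round trip dominates.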

Gemma 4 on Edge Devices

Google has demonstrated that the Gemma 4 E2B and E4B models work as on-device AI on devices like Raspberry Pi and NVIDIA Jetson Orin Nano. According to the official Google Developers blog, these multimodal models execute tasks with near-zero latency on edge devices.

This opens use cases that previously required a cloud connection:

- Smart agriculture: Cameras with integrated AI that detect pests or diseases in crops without sending images to any server

- Manufacturing: Sensors that identify anomalies in real time and automatically adjust production parameters

- Retail: In-store devices that analyze behavior patterns without sending video to the cloud

- Healthcare: Medical devices that process patient data locally, achieving privacy compliance by design

Laura, founder of a healthtech startup, is already implementing it: “Our cardiac monitoring device uses a Gemma E2B model to analyze patient data on the device itself. Sensitive data never leaves the unit. That shaved six months off our medical certification process because the privacy framework is much simpler.”

The technical ecosystem that makes this possible includes tools like ExecuTorch (on-device deployment with a base runtime footprint of about 50KB), llama.cpp for CPU inference, and MLX for Apple Silicon. All open source. All free.

If you’re interested in open source AI tools, check out our article on open-source AI tools like OpenClaw to understand where the ecosystem is heading.

What All of This Means for Your Business

The technology is fascinating. But if you’re running a business or building a product, the real question is: how does this affect me?

If you have an app or a digital product: On-device AI lets you integrate AI directly into your application without depending on third-party APIs. No per-call costs. No network latency. No sending your users’ data to external servers. For features like smart search, summaries, contextual assistants, or translation, a model like Gemma 4 E4B is more than enough.

If you work with IoT or hardware: You can add intelligence to your devices without needing permanent connectivity or cloud infrastructure. A sensor, a camera, or an embedded device with a Gemma E2B model can make intelligent decisions autonomously.

If you handle sensitive data: 100% local processing means simplified compliance. GDPR, HIPAA, industry-specific regulations. If data never leaves the device, an entire layer of regulatory complexity disappears.

If you want to reduce AI costs: Every call to a large model’s API costs money. At scale, those cents turn into thousands of dollars per month. Running a local model on the user’s device has no per-call fee: the only cost is the device’s own compute and battery.
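To see how cents become thousands of dollars, here is a minimal estimate of a metered API bill. The workload and the price per million tokens are hypothetical, so substitute your provider’s real rates.

```python
def monthly_api_cost(calls_per_day: int, tokens_per_call: int,
                     usd_per_million_tokens: float, days: int = 30) -> float:
    """Estimated monthly bill for a metered cloud API."""
    total_tokens = calls_per_day * tokens_per_call * days
    return total_tokens / 1_000_000 * usd_per_million_tokens


# Hypothetical workload and price, for illustration only.
cost = monthly_api_cost(calls_per_day=50_000, tokens_per_call=1_500,
                        usd_per_million_tokens=3.0)
print(f"Estimated cloud bill: ${cost:,.0f} per month")  # $6,750 per month
```

The same workload served by a model on each user’s device adds nothing to that bill, although it does spend the device’s compute and battery.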

If you need AI available at all times: In sectors like logistics, transportation, or emergency services, you can’t depend on network coverage to use AI. A model like Gemma 4 running on the edge keeps artificial intelligence available wherever the device itself is running, with or without a signal.

The future isn’t “cloud or local.” It’s hybrid: large models in the cloud for complex tasks that demand maximum capability, and models like Gemma 4 on the device for everything else. The key is knowing when to use each one.
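One way to picture "knowing when to use each one" is a small routing rule. This is a sketch with deliberately simple, hypothetical criteria (sensitivity, connectivity, reasoning depth). A real product would tune these to its own tasks.

```python
from dataclasses import dataclass


@dataclass
class Task:
    description: str
    needs_deep_reasoning: bool = False
    contains_sensitive_data: bool = False


def choose_backend(task: Task, online: bool) -> str:
    """Pick "local" or "cloud" using simple, illustrative rules."""
    if task.contains_sensitive_data or not online:
        return "local"  # data stays on the device, or there is no network
    if task.needs_deep_reasoning:
        return "cloud"  # use the larger model when it is reachable and allowed
    return "local"      # default to the on-device model for everything else


print(choose_backend(Task("summarize a client proposal", contains_sensitive_data=True), online=True))
print(choose_backend(Task("design a system architecture", needs_deep_reasoning=True), online=True))
```

The first task stays local because it carries client data. The second goes to the cloud only because a connection is available and nothing sensitive is involved.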

If you want to explore how to integrate local AI or edge computing into your product, AI consulting for businesses is exactly what we do at LetBrand. We audit your workflows, identify where AI delivers real value, and help you implement it without smoke and mirrors.

Gemma 4 and the Future of Local AI: Closer Than You Think

Let’s recap the essentials:

- Google Gemma 4 is an open source AI model that runs on your phone without internet, with multimodal capabilities (text, image, audio) and autonomous Agent Skills

- Apps like Edge Gallery and Locally AI let you try it today on iOS or Android

- The same technology applied to edge computing and IoT enables sensors, cameras, and industrial devices to process data with AI without depending on the cloud

- Today, small language models like Gemma 4 don’t compete with the large ones. But the gap closes every year, and Gartner predicts that by 2027 organizations will use small, specialized models three times more than large general-purpose ones

- For your business, this means AI that is cheaper, more private, and available anywhere

AI is leaving the data centers and arriving in your pocket. Not as a luxury product or a demo. As a real tool that works without Wi-Fi, without an API, and without sending your data to anyone.

What we’re seeing with Gemma 4 isn’t just another model release. It’s the beginning of a paradigm shift in how artificial intelligence is distributed and consumed. When you can run a multimodal model, with autonomous agent capabilities, completely offline on a device that fits in your pocket, the rules of the game change for developers, businesses, and users alike.

Privacy stops being a marketing promise. Latency stops being a problem. And the cost of using AI drops to zero per inference. This isn’t science fiction. It’s technology you can download today.

The question is no longer whether AI will reach devices. It already has. The question is when you’ll start using it.

Have a project where Gemma 4, local AI, or edge computing could make a difference? Tell us about your project. 30 minutes, no pitch, no strings attached. We just talk about what makes sense for your case.
